This is shown in the R code as maxResults.Īs for the sort, the landing page defaulted by most “Popular”. To confirm this, I changed the URL directly and that is indeed where the last review falls off.

With 1,112 total reviews (and no option to increase the number of results per page), I figured results would max out at “_P112” (i.e., round 1,112 up to the tenth which is 1,120 / 10 = 112). Clicking to page 2 changes the URL so that “_P2” is added towards the endpoint. The landing page will be: and it includes the first 10 results. I explored how the URL changed as I clicked through the page results and applied different sorting. The first line of order is to know how to access the data online. Here’s an “unwrapped” version of the function’s code gist that will get the same results.īefore we go over what’s going on here, there’s two things we have to figure out: 1) how the data can be accessed, and 2) how the data is structured in HTML. Specifically, it relies on the rvest, httr, xml2, and purrr packages. Easy, right? Well, when we decompose it, we’ll see that it’s basically just a wrapper function for other packages that do all the heavy lifting to make the scraping process easy through an HTML parsing method.

The gdscrapeR scraper uses one function - get_reviews() - and you pass the companyNum parameter through it. Is this some sort of magic? Nope, it’s gdscrapeR! We now have a dataframe with 1,112 rows - one for each reviewer - that can be exported to a CSV file. What’s going on here? One moment, I was data-less, and then a few minutes later, BOOM! - a table appeared. Now, we can get these text data into a table with a few lines of code using gdscrapeR: For SpaceX, the company number in “is “E40371”. It’s identified as all the characters between “Reviews-“ and “.htm” (usually starts with a letter(s) and followed by up to seven digits).

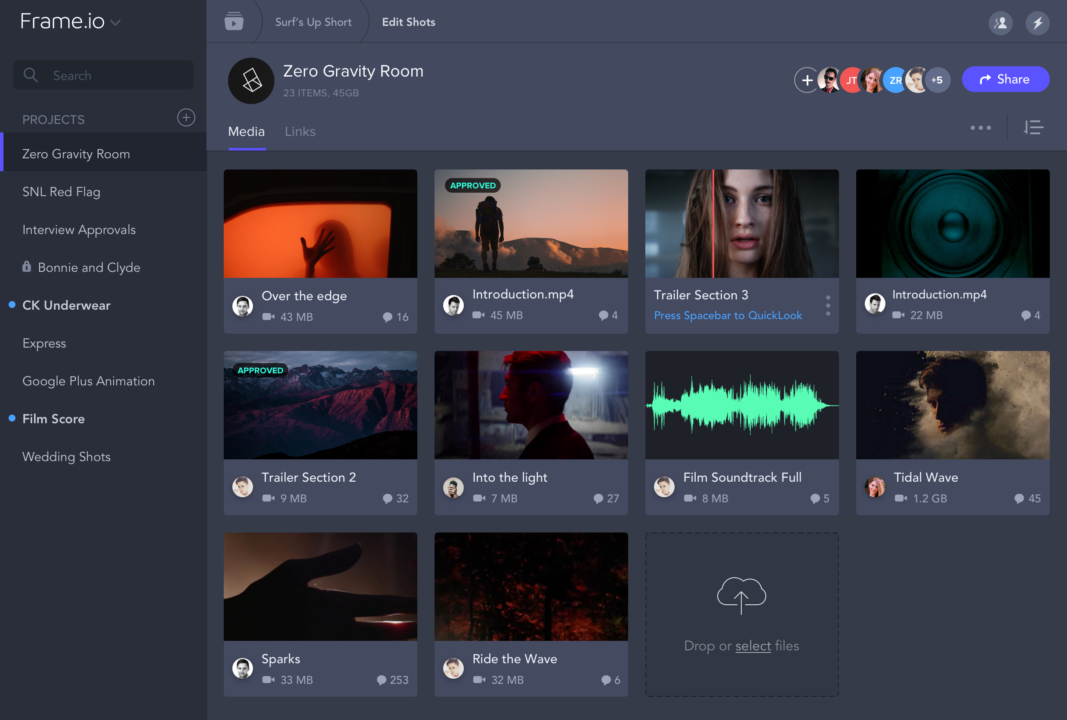

We just need to note the company’s unique Glassdoor ID number, which is found in the URL. Helpful - count marked as being helpful, if any Title - e.g., “Current Employee - Manager in Hawthorne, CA” Rating - overall star rating between 1.0 and 5.0 The company currently has 1,112 total reviews, so with 10 reviews per results page, we’ll be scraping across 112 pages for the following: Let’s say we want to scrape text data from the company reviews for SpaceX. In this case, the answer is simple - Glassdoor has an API available, but not for the contents I’m after: the company reviews. Although most websites are developer-friendly and provide an Application Programming Interface (API) for obtaining data, there are various reasons why it may not be a better option over web scraping. “Why not use the API instead of scraping,” you ask? I think all skilled data analysts should have some scraping tools because there’s so many possibilities in harvesting an abundance of data from the wide open spaces of the Internet. It’s a way to build your own dataset that doesn’t involve cramped fingers from Ctrl+C and Ctrl+V. Web scraping is a technique of automatically mining information from a website.

I admit I’ve done this plenty of times before and I hope not to ever have to again. HTML is in an unstructured format and no one wants to manually “copy and paste” into a spreadsheet to gather the data. If we’re lucky, it’s neatly packaged and readily available, but that is hardly ever the case. Go straight here if you’re looking for the R package files.Īnalysts like to look out for interesting datasets as they browse the web. Most things on the web can be scraped and there’s many methods to do so, but did you know R has these capabilities? I’ve had a few folks ask me how and having automated the web scraping for Glassdoor company reviews with the gdscrapeR package, I’ll demo its usage as an example.
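To make the URL logic described above concrete, here is a minimal base R sketch, not the package's actual code. It pulls the company ID out of a review URL, works out the number of result pages, and builds the paginated URLs. The example.com address is a hypothetical placeholder: only the “Reviews-<ID>.htm” shape and the “_P<n>” page marker come from the text, and putting the marker directly before “.htm” is an assumption. The helper pageUrl() is made up for illustration, while maxResults borrows the name mentioned above.

```r
# Hypothetical placeholder URL; only the "Reviews-<ID>.htm" shape is taken from the text.
landingUrl <- "https://www.example.com/Reviews/SpaceX-Reviews-E40371.htm"

# Company ID: all characters between "Reviews-" and ".htm".
companyNum <- sub(".*Reviews-(.*)\\.htm$", "\\1", landingUrl)

# Number of result pages: total reviews divided by reviews per page, rounded up.
totalReviews <- 1112
perPage <- 10
maxResults <- ceiling(totalReviews / perPage)

# Illustrative helper: page 1 is the landing page itself; later pages get a
# "_P<n>" marker inserted just before ".htm" (assumed placement).
pageUrl <- function(url, page) {
  if (page == 1) {
    return(url)
  }
  sub("\\.htm$", paste0("_P", page, ".htm"), url)
}

# One URL per results page, from the landing page through the last page.
pageUrls <- vapply(
  seq_len(maxResults),
  function(p) pageUrl(landingUrl, p),
  character(1)
)

print(companyNum)
print(maxResults)
print(pageUrls[c(1, 2, maxResults)])
```

Going by the description above, the extracted ID is the value that would be passed as companyNum to get_reviews(), and a vector like pageUrls is what a scraper would loop over to collect 10 reviews per page.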
